
Advanced any-to-any voice conversion via Wav2Vec 2.0 and cross-attention fragment customization.

FragmentVC is an any-to-any voice conversion (VC) model. Unlike traditional models that rely on fixed speaker embeddings or bottleneck features, FragmentVC builds on latent representations from a pre-trained Wav2Vec 2.0 model. Its core architecture uses a cross-attention mechanism that aligns phonetic 'fragments' of the source utterance with the characteristics of the target speaker. This allows high-fidelity conversion to a target speaker that was never seen during training, using only a small amount of reference audio, a setting known as zero-shot voice conversion.

By 2026, FragmentVC has transitioned from a purely academic repository into a foundation for various enterprise-grade voice modulation tools. It remains highly regarded in the research community for maintaining phonetic consistency while achieving strong speaker-identity transfer. The model specifically addresses the 'over-smoothing' problem common in neural vocoders by focusing on the fine-grained structure of speech sounds, which makes it useful to developers building real-time translation and personalized AI communication platforms.
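The cross-attention idea described above can be illustrated with a minimal NumPy sketch. This is not the FragmentVC implementation: it assumes source and target frames have already been projected to the same feature dimension, it omits the learned projections and multi-layer structure a real model would have, and the names `cross_attention`, `source_frames`, and `target_frames` are hypothetical. Each source frame acts as a query, scores every target frame by similarity, and is rebuilt as a weighted mix of target frames.

```python
import numpy as np


def cross_attention(source, target):
    """Scaled dot-product cross-attention (illustrative sketch only).

    source: (T_src, d) array of content frames, used as queries.
    target: (T_tgt, d) array of target-speaker frames, used as keys and values.
    Returns the attended output (T_src, d) and the weights (T_src, T_tgt).
    """
    d = source.shape[-1]
    # Similarity between every source frame and every target frame.
    scores = source @ target.T / np.sqrt(d)
    # Numerically stable softmax over the target axis.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    # Each output frame is a weighted combination of target frames.
    return weights @ target, weights


# Random stand-ins for real features (assumption: 8-dimensional frames).
rng = np.random.default_rng(0)
source_frames = rng.standard_normal((5, 8))
target_frames = rng.standard_normal((12, 8))

converted, attn = cross_attention(source_frames, target_frames)
print(converted.shape, attn.shape)  # prints: (5, 8) (5, 12)
```

Each row of `attn` sums to 1, so every output frame is a convex combination of target-speaker frames. That is the sense in which the output is assembled from fragments of the target voice.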



Leverages self-supervised speech representations to capture robust phonetic features regardless of speaker noise.

The model calculates the similarity between source and target fragments to reconstruct the target's voice.

Architected to convert voices of speakers that were never seen during the training phase.

Separates the 'what' (content) from the 'who' (identity) in the latent space.

Optimized to work with high-fidelity vocoders for crystal-clear output.

Ability to blend characteristics from multiple target speakers into a single output.

Designed for efficient inference on consumer-grade NVIDIA GPUs.

Clone the FragmentVC repository from GitHub.

Create a virtual environment using Python 3.8+.

Install PyTorch and Torchaudio with CUDA support for GPU acceleration.

Install dependencies including Fairseq and Librosa.

Download the pre-trained Wav2Vec 2.0 base model.

Download the FragmentVC pre-trained model weights.

Prepare your source and target audio files (16 kHz mono recommended).

Configure the inference script with the paths to the models and audio.

Run the conversion script to generate the latent audio fragments.

Utilize a vocoder (like HiFi-GAN) to synthesize the final output waveform.
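For the audio preparation step above, it can help to confirm that files match the recommended 16 kHz mono format before running a conversion. The following is a minimal sketch using only Python's standard `wave` module. It assumes uncompressed PCM WAV input, and the helper names (`describe_wav`, `is_16k_mono`, `write_silence`) and file names are hypothetical and not part of the FragmentVC repository.

```python
import os
import tempfile
import wave


def describe_wav(path):
    """Return the sample rate and channel count of a PCM WAV file."""
    with wave.open(path, "rb") as wav:
        return {
            "sample_rate": wav.getframerate(),
            "channels": wav.getnchannels(),
        }


def is_16k_mono(path):
    """True if the file matches the recommended 16 kHz mono format."""
    info = describe_wav(path)
    return info["sample_rate"] == 16000 and info["channels"] == 1


def write_silence(path, sample_rate, channels, seconds=1):
    """Write a silent 16-bit PCM WAV file (used only for this demo)."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(channels)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit audio
        wav.setframerate(sample_rate)
        wav.writeframes(b"\x00\x00" * channels * sample_rate * seconds)


# Demo on two generated files: one in the recommended format, one not.
with tempfile.TemporaryDirectory() as tmp:
    good = os.path.join(tmp, "source_16k_mono.wav")
    bad = os.path.join(tmp, "target_44k_stereo.wav")
    write_silence(good, 16000, 1)
    write_silence(bad, 44100, 2)
    results = {os.path.basename(p): is_16k_mono(p) for p in (good, bad)}

print(results)
```

Files that fail the check would need to be resampled and downmixed with an audio tool of your choice before conversion.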

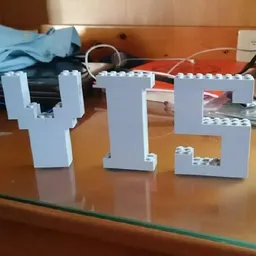

All Set

Ready to go

Verified feedback from other users.

"Highly praised by audio engineers for its zero-shot capabilities and natural prosody, though technical setup is challenging for non-developers."
